Thanks to the hard work from Andrea Belloni @anbello ,@chobitsfan , @SubMishMar and with the help of Randy @rmackay9 , we are now able to experiment with Vision Positioning and going toward more advanced system like Visual Odometry and Visual SLAM.

I present here an experiment that can be relatively easy to replicate with an off-the-shelf inexpensive Micro Drone that can be used safely in an indoor space: the Skyviper Journey. Just a friendly reminder before you start, this is advanced stuff and you need to know ROS , Linux, Python and you are able to configure your network and WIFI in order to make it work. I am available to discuss about the Vision System but for the basics, there are tons of resources on google and ArduPilot wiki is quite good http://ardupilot.org/dev/docs/ros.html

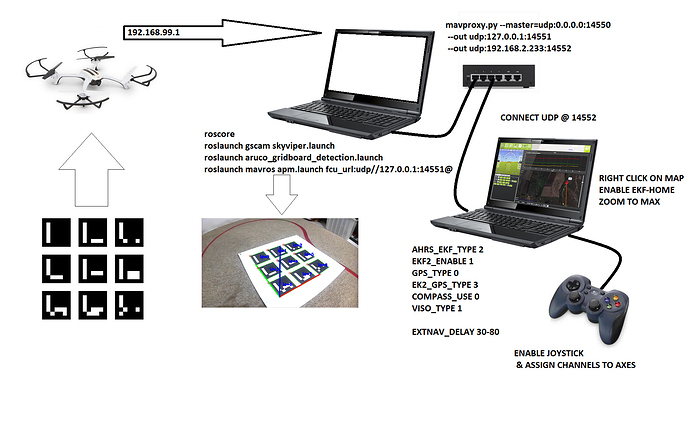

Here is the general configuration, 2 Laptops are used for this setup. First one is a Ubuntu running ROS to capture the Skyviper Camera looking down at the Aruco Grid on a GSTREAMER rstp stream that is processed with OpenCV to extract the camera pose. This topic is then transformed into MavLink compatible https://mavlink.io/en/messages/common.html#VISION_POSITION_ESTIMATE and transmitted to the vehicle using Mavros. Second Laptop running Win 10 and Mission Planner for Control using Joystick as RC Override and display vehicle position on map.

What do we need:

1- Ubuntu 16.04 and ROS kinetic desktop full installed and working

2- Gstreamer 1.x (This should preloaded with Ubuntu 16)

3- Mavros & Mavros Extra Installed

4- Ardupilot and tools installed

5-A fully working and prop balanced SkyViper in AP Mode (192.168.99.1)

BUILD & CONFIGURE SKYVIPER

a- Build Skyviper from Master:

- ./waf configure --board skyviper-f412

- ./waf build --target=bin/arducopter

b- Upload the abin file using the web interface @192.168.99.1/upgrade.html

c- Set Parameters within mission planner

d- The new build will disable usage of the original DSM transmitter (Issue Opened). You can use a USB Joystick connected to Mission Planner and configure as below (add Arm & Desarm buttons) and check that axes are OK using Radio Calibration Menu.

e- Do a test flight in manual mode to verify that all is OK

ROS

a- Using catkin tools , build a catkin_ws

b- clone @anbello github https://github.com/anbello/aruco_gridboard

c- clone gscam https://github.com/ros-drivers/gscam and set -DGSTREAMER_VERSION_1_x=On

d- catkin build and source

e- Set SKYVIPER camera resolution to 640 x 360 using the web page

f- Calibrate camera using the ROS checkerboard method and add path of the resulting camera yaml file in the gscam launch file

g- Build the ARUCO GridBoard and calibrate the layout file (3x3layout.yaml)

Test by setting the debug to True in detection launch file

Connect WIFI to SKYVIPER

- roscore

- roslaunch gscam skyviper.launch (you can chek with rqt_imageview)

- roslaunch aruco_gridboard-detection.launch

You should be able to see the image with the overlay XYZ axes and data being displayed correspond to Aruco Pose.

Then you can launch MAVROS , but we need to set mavproxy as the SKYVIPER allows for just one port (14550) opening. This is my command, it may vary depending on your setup:

mavproxy.py --master=udp:0.0.0.0:14550 --out udp:127.0.0.1:14551 --out udp:192.168.2.233:14552

You can connect Mission Planner @14552 and activate the Joystick

Launch Mavros

roslaunch mavros apm.launch fcu_url:=udp://127.0.0.1:14551@

The signal should look like this on Windows QGC Analyse Widget

Go on Mission Planner and Right Click on the Map to set EKF Home, yould see vehicle appears. Zoom to maximum

Hold the Skyviper in you hands and walk around the ARUCO Grid, you must see the vehicle move accordingly on Mission Planner Map (look at the video above).

Set MP in Loiter , ARM and takeoff with Joystick, fly over the Aruco Grid, and if the Skyviper is well tuned and has minimal vibration, it should hold over the grid with minimum drift. Voilà !!

You can adjust the Camera capture Delay with EXTNAV_DELAY (work in progress)

PLEASE NOTE: This is a draft, more information and more code will be added soon.