Hey @jeff_null, thanks for a detailed explanation here. I also wanted to know the rostopic that I need to check to see what I am getting as an output when /mavros/vision_pose/pose is sent as an input.

In case of PX4, it is /mavros/local_position/pose because there we use something called LPE (which you probably know). What rostopic should I check here?

@jeff_null are you sure that we need to have local* white listed in the mavros apm plugin file because APM does not have a local position estimation module.

The EKF-fused pose should still be published as ‘/mavros/local_position/pose’. It is on my system, at least. And I’m not 100% sure about needing the local* plugin whitelisted; I will try without it.

Hey @jeff_null, thanks for the response. Did you check if you have the local* plugin whitelisted? Please let me know.

Would you mind to share your apm_config and apm_pluginlists with me? and also the vision_position_estimate.cpp if possible.

@jeff_null in which part of the vision_pose_estimate.cpp do you set origin of the GPS coordinates. I have done it in the void initialize() function, is that okay?

Here is how it looks in my vision_pose_estimate.cpp code:

void initialize(UAS &uas_)

{

PluginBase::initialize(uas_);

mavlink::common::msg::SET_GPS_GLOBAL_ORIGIN gpo;

gpo.target_system = m_uas->get_tgt_system();

// gpo.time_boot_ms = stamp.toNSec() / 1000; #TODO: requires Mavlink msg update

gpo.latitude = 30.6197674;

gpo.longitude = -96.3414215 ;

gpo.altitude = 99.9674829152;

bool tf_listen;

// tf params

sp_nh.param("tf/listen", tf_listen, false);

sp_nh.param<std::string>("tf/frame_id", tf_frame_id, "map");

sp_nh.param<std::string>("tf/child_frame_id", tf_child_frame_id, "vision_estimate");

sp_nh.param("tf/rate_limit", tf_rate, 10.0);

old_x = old_y = old_z = old_roll = old_pitch = old_yaw = old_time = 0;

flag_var = false;

if (tf_listen) {

ROS_INFO_STREAM_NAMED("vision_pose", "Listen to vision transform " << tf_frame_id

<< " -> " << tf_child_frame_id);

tf2_start("VisionPoseTF", &VisionPoseEstimatePlugin::transform_cb);

}

else {

vision_sub = sp_nh.subscribe("pose", 10, &VisionPoseEstimatePlugin::vision_cb, this);

vision_cov_sub = sp_nh.subscribe("pose_cov", 10, &VisionPoseEstimatePlugin::vision_cov_cb, this);

}

}@jeff_null How exactly did you send the ‘mavlink::common::msg::SET_GPS_GLOBAL_ORIGIN’ message ? I think the code snippet you have posted here just sets the value for the above message type but how to send it, using what command?

I just want people to know that adding support for the vision-position-estimate is actively being worked on. We’re making progress, here’s our latest branch which basically seems to work in the simulator but needs more testing.

So, it’s getting close!

-

Yes, I have the local_position plugin whitelisted. It is responsible for publishing the ‘/mavros/local_position/pose’ topic, which is the final, EKF-fused position estimate.

-

I’ve created a new plugin file (vision_delta_estimate.cpp), as well as updating the appropriate mavros_plugins.xml and CMakeLists.txt files. I’ll be publishing these on our official company’s project github page soon.

-

I publish the origin-setting mavlink message upon receipt of the first visual pose estimation, but doing it in initialize() should work as well. The complete code to send the message is:

mavlink::common::msg::SET_GPS_GLOBAL_ORIGIN gpo; gpo.target_system = m_uas->get_tgt_system(); gpo.latitude = 37.2343 * 1E7; gpo.longitude = -115.8067 * 1E7; gpo.altitude = 610.0 * 1E3; // roughly 610m above sea level UAS_FCU(m_uas)->send_message_ignore_drop(gpo);

Thanks… please let me know the link of the github page here so that I can keep looking for the update. Infact I have made it work by doing everything you have mentioned here. Earlier it was not working because my EKF3 was disabled but now it does because I enabled the EKF3. My current problem is with sending deltas, I am not sure how to send correct delta values, I want to know the correct way of doing it.

Calculating the deltas in the correct frame of reference took me quite a while. First, please note that in vision_pose_estimate.cpp, a rotation is added to the incoming pose estimate’s rotations, but NOT the positions. Specifically, on line 126-129:

auto position = ftf::transform_frame_enu_ned(Eigen::Vector3d(tr.translation()));

auto rpy = ftf::quaternion_to_rpy(

ftf::transform_orientation_enu_ned(

ftf::transform_orientation_baselink_aircraft(Eigen::Quaterniond(tr.rotation()))));

For the deltas, remove the “ftf::transform_orientation_baselink_aircraft” call.

Then, calculating the deltas is fairly straightforward:

/// Position delta

Eigen::Vector3d delta_pos = position - prev_position;

// Rotate the position difference into the previous frame of reference

delta_pos = prev_rotation_inverse*delta_pos;

where ‘prev_rotation_inverse’ is the inverse of the previous frame’s rotation. Similarly:

/// Rotation delta

const Eigen::Affine3d cur_rotation = trs_rpy_to_matrix(Eigen::Vector3d(0,0,0), rpy);

const Eigen::Affine3d delta_rot = prev_rotation_inverse*cur_rotation;

const Eigen::Vector3d delta_rpy = quaternion_to_rpy(Eigen::Quaterniond(delta_rot.rotation()));

Put these values into the mavlink::ardupilotmega::msg::VISION_POSITION_DELTA message, and you’re good to go. (Don’t forget to put the time between your delta updates into that message’s ‘time_delta_usec’ field.

As a side note, we have a PR which adds support for the vision-position messages to ArduPilot. It’s still being reviewed but I hope it will go into master by next Monday.

cool i am waiting for it

Hey, This answer helps. Actually, could you please tell me what order of rotations did you use to conver RPY values to rotation matrix… was it Rot(Roll)*Rot(Pitch)*Rot(Yaw) or anything else?

An update on our progress, the PR mentioned above to add support for the vision-position messages is in master but two users (including @vincekurtz) found that it did not work because the system times are normally different between the companion-computer/GCS and the flight controller. This leads to the EKF ignoring the messages.

We’ve got a new PR that syncronises the clocks between the two computers and we hope this will resolve the problem. Hopefully this PR will go into master this week.

I just wanted to mention here that for me SET_GPS_GLOBAL_ORIGIN, didn’t work, I used SET_HOME_POSITION and this worked.

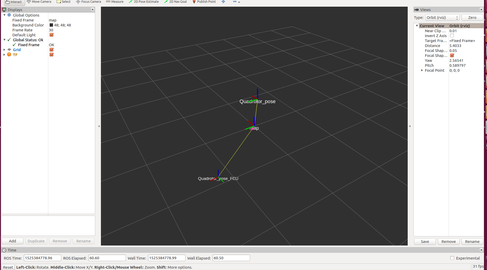

In the image attached to this comment, you can find the pose/tf published/broadcasted using visual marker localisation(Quadrotor pose) and the arducopter code(Quadrotor_pose_FCU via /mavros/local_position/pose). Any idea how can I ensure that both will be aligned, will a simple rotational and positional offset removal work?

When working with tf we generally use this tool

Thanks! I really appreciate this help.

Actually, I don’t understand where I am going wrong. Let me jot down what I am actually doing here:

- I am capturing images from a small downward looking camera on board my skyviper drone and streaming it over wifi to my ground station(ubuntu 16.04 running mavros node for apm firmware with the appropriate udp address for skyviper) which runs a ROS node to work on these images.

- The ROS node detects AruCo markers (on the ground) that the camera sees and calculates the drone’s pose with respect to a fixed self determined frame using opencv’s aruco marker detection and pose estimation algorithm.

- I publish this pose as /mavros/vision_position/pose and the mavros node running on my PC subscribes to this topic to relay it to the FCU.(for sure, I white list the vision_position and local_position plugins)

- I make some changes in the vision_pose_estimate.cpp file so that it accepts the pose updates mentioned in the step 4 and calculates position and angular deltas out of it. Infact I follow what @jeff_null has done for his project. Now these deltas are sent from the mavros node to the FCU.

- The arducopter code does some magic and sends the local position estimates to the mavros node which publishes a topic called /mavros/local_position/pose containing the local pose estimate data . I use this topic to visualize the data generated by FCU. I don’t understand why there is so much offset between the pose estimated by the aruco marker detection algorithm(/mavros/vision_position/pose) and the one estimated by FCU(/mavros/local_position/pose).

Any help will be gratefully acknowledged!

@Subodh_Mishra

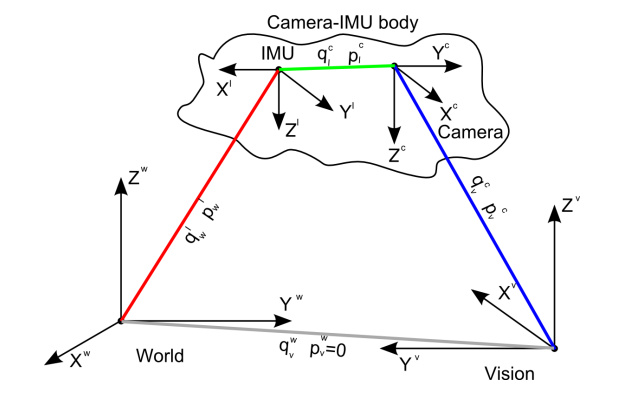

Just to make sure, do you publish a Transformation between the Camera and the IMU like this: